A systematic review is a rigorous, reproducible synthesis of all available research that addresses a specific, pre-defined question. Unlike narrative reviews, a systematic review follows a registered protocol, searches multiple databases comprehensively, screens studies against explicit inclusion criteria, extracts data using standardised forms, and appraises the risk of bias of every included study before synthesising the evidence and reporting the result against the PRISMA 2020 checklist.

Systematic reviews sit at the top of the evidence hierarchy and inform clinical guidelines, health-technology assessments, and public-health policy. The method developed because narrative reviews are vulnerable to selection bias, citation bias, and the reviewer's prior beliefs. Following a published protocol, dual-screening every record, and appraising every included study is the discipline that makes a review trustworthy enough to act on.

Key takeaways

- A systematic review follows a registered protocol, a comprehensive multi-database search, dual screening, structured extraction, risk-of-bias appraisal, and PRISMA 2020 reporting.

- The seven phases (question, protocol, search, screening, extraction, appraisal, synthesis) are sequential but iterative; each phase has documented standards.

- Use PICO for clinical interventions, PECO for exposure questions, and SPIDER for qualitative or mixed-methods questions.

- Search at least three databases (MEDLINE, Embase, CENTRAL) and supplement with CINAHL, PsycINFO, or trial registries as the topic requires.

- Dual independent screening with arbitration is the methodological floor; AI-assisted screening can preserve that redundancy while cutting the human time required.

- Use RoB 2.0 for randomised trials and ROBINS-I for non-randomised studies; report the judgement at the outcome level, not just the study level.

- Pre-specify whether you intend to meta-analyse and what synthesis you will fall back to if heterogeneity prevents pooling.

What is a systematic review?

A systematic review is a structured method for identifying, appraising, and synthesising every study relevant to a specific research question, using methods that are pre-specified, registered, and reproducible. The protocol is published before the search begins; the inclusion criteria are explicit; the search covers multiple databases; every record is screened against the criteria; and every included study is appraised for risk of bias.

The defining feature is reproducibility. Two independent teams following the same protocol on the same date should arrive at the same set of included studies and the same risk-of-bias judgements. That standard is what allows a systematic review to support a clinical guideline or a regulatory decision in a way that a narrative literature review cannot. According to the Cochrane Handbook[1], the method exists because human reviewers are vulnerable to selection bias, citation bias, and confirmation bias when choosing which evidence to read and credit.

When to conduct one (and when not to)

A systematic review is the right tool when the research question is clearly defined, when a body of primary studies exists, and when the answer would change clinical practice, policy, or future research. It is the wrong tool for very new fields with little primary evidence, for questions that depend on personal experience or theoretical argument, and for fast-moving topics where the protocol would be obsolete before the review concluded.

- Pick a systematic review when you expect roughly 5 to 10 or more primary studies, the outcome is measurable, and the topic is reasonably stable.

- Pick a scoping review when the literature is heterogeneous, the question is broad, or you are mapping a research field rather than estimating an effect.

- Pick a rapid review when the decision is urgent and a methodologically transparent shortcut is acceptable.

- Pick a living systematic review when the evidence base is changing fast and a static review would be obsolete on publication.

Before you commit, run a feasibility check. The Cochrane Handbook recommends a scoping search for existing or in-progress reviews on the same question (PROSPERO, Cochrane Library, Epistemonikos) and an honest estimate of how many studies are likely to be included. A systematic review is a months-long commitment; an hour of feasibility work can save months of wasted effort.

The seven phases of a systematic review

Most systematic reviews follow a seven-phase methodology consistent with the Cochrane Handbook[1] and the PRISMA 2020 statement[2]. The phases are sequential but iterative; the protocol may be amended (with each amendment recorded) as new issues surface during execution.

- Formulate the question using PICO, PECO, or SPIDER.

- Develop and register the protocol on PROSPERO or an equivalent registry.

- Search multiple databases and grey-literature sources comprehensively.

- Screen titles, abstracts, and full texts against the protocol's inclusion criteria, with dual independent reviewers.

- Extract data using a standardised form, piloted before use.

- Assess risk of bias in every included study using a domain-appropriate tool (RoB 2.0, ROBINS-I, etc.).

- Synthesise the evidence, narratively or via meta-analysis, and report against the PRISMA 2020 checklist.

Borah and colleagues[3] documented the effort: a typical review takes 1,400 person-hours and 12 to 18 months from protocol registration to publication, with screening and extraction consuming the majority of that time. The phases below describe each step in detail.

Formulating the question (PICO, PECO, SPIDER)

The research question is the most consequential decision in the entire review. A well-formulated question constrains every later phase, from the search strings to the inclusion criteria to the synthesis. Poorly framed questions produce reviews that are either unanswerable (too broad) or trivial (too narrow).

PICO (effectiveness)

The default framework for clinical effectiveness questions. Population, Intervention, Comparator, Outcome. Example: in adults with chronic low back pain (P), does cognitive behavioural therapy (I) compared with usual care (C) reduce pain and disability (O)?

PECO (exposure)

Substitute Exposure for Intervention when the question is about observational evidence. Example: in pregnant women (P), is exposure to fine particulate matter (E) compared with low-pollution settings (C) associated with low birth weight (O)?

SPIDER (qualitative and mixed-methods)

Sample, Phenomenon of Interest, Design, Evaluation, Research type. Used when the question concerns experiences or processes rather than measurable effect sizes.

Once the framework is chosen, draft the question in a single sentence and stress-test it: every word should be defensible, and you should be able to write the inclusion criteria directly from the sentence without inventing new constructs.

Protocol development and PROSPERO registration

The protocol is the binding document. It declares the question, the search strategy, the inclusion and exclusion criteria, the screening procedure, the extraction form, the risk-of-bias tool, the synthesis plan, and the deviations procedure. Registering the protocol on PROSPERO (or a domain-equivalent registry) before the search begins prevents post-hoc rationalisation and lets readers see what you intended to do, not just what you ended up doing.

The PRISMA-P 2015 checklist is the standard structure for the protocol document. Cochrane reviews follow the Methodological Expectations of Cochrane Intervention Reviews (MECIR) standards; for non-Cochrane reviews, follow PRISMA-P and register on PROSPERO. According to the PRISMA 2020 statement[2], the published review should report the registry name and registration number and state where the protocol can be accessed.

- Protocol amendments are allowed, but each must be recorded with date and rationale, and the published review must list every amendment.

- Pre-specified subgroups belong in the protocol; subgroups added during synthesis are exploratory at best, fishing at worst.

- Pilot work (search-strategy validation, extraction-form piloting) should be described in the protocol so readers can judge the form's reliability.

Comprehensive search across multiple databases

The search strategy is the foundation of every later phase: a study you do not find cannot be screened. Cochrane recommends a minimum of three databases for clinical questions (MEDLINE, Embase, CENTRAL), with CINAHL, PsycINFO, regional databases, and trial registries added as the topic warrants. Single-database searches systematically miss eligible studies and are not defensible.

The search string should combine controlled vocabulary (MeSH in MEDLINE, Emtree in Embase) with free-text terms in the title and abstract, joined by Boolean operators. Date limits, language limits, and study-design filters all narrow the search and must be justified in the protocol. The PRESS 2015 checklist[4] provides a structured peer review for the search string itself; a librarian-validated search is the gold standard.
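
To make the structure concrete, here is a minimal Python sketch that assembles a PubMed-style query from concept blocks: synonyms within a concept are joined with OR, and concepts are joined with AND. The `[Mesh]` and `[tiab]` field tags are real PubMed syntax. The helper names and the example terms (drawn from the back-pain PICO question earlier) are illustrative assumptions, not a validated strategy, and other interfaces such as Ovid or Embase.com use different syntax.

```python
from dataclasses import dataclass, field


@dataclass
class Concept:
    """One PICO concept: controlled-vocabulary headings plus free-text synonyms."""
    name: str
    mesh: list[str] = field(default_factory=list)
    free_text: list[str] = field(default_factory=list)

    def to_pubmed(self) -> str:
        # MeSH headings use the [Mesh] tag; free text is searched in title/abstract via [tiab].
        terms = [f'"{h}"[Mesh]' for h in self.mesh]
        terms += [f'"{t}"[tiab]' for t in self.free_text]
        # Synonyms for the same concept are combined with OR.
        return "(" + " OR ".join(terms) + ")"


def build_query(concepts: list[Concept]) -> str:
    """Combine concept blocks with AND so every record must match every concept."""
    return " AND ".join(c.to_pubmed() for c in concepts)


# Hypothetical example terms for the population and intervention concepts.
population = Concept("P", mesh=["Low Back Pain"], free_text=["low back pain", "lumbago"])
intervention = Concept(
    "I",
    mesh=["Cognitive Behavioral Therapy"],
    free_text=["cognitive behavioural therapy", "cognitive behavioral therapy"],
)

print(build_query([population, intervention]))
```

Comparator and outcome concepts are often left out of the search string itself to protect sensitivity; whether to include them is a decision for the protocol and the PRESS review.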

Document everything. PRISMA-S, the PRISMA extension for searching, requires reporting the database name, vendor, search dates, full search string, and number of records retrieved per source, alongside any supplementary searching (citation-tracking, reference-list-checking, contact with authors). Deduplicate before screening.
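
Deduplication is usually handled by reference-management or screening software, but the underlying idea is simple. The Python sketch below, a rough illustration rather than a production de-duplicator, treats two records as duplicates when their DOIs match or, failing that, when their normalised title and publication year match. The record fields, normalisation rule, and example records are assumptions; real database exports need fuzzier matching (author lists, pagination, typos in titles).

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    source: str              # database the record came from, e.g. "MEDLINE"
    title: str
    year: int
    doi: Optional[str] = None


def normalise_title(title: str) -> str:
    # Lower-case and drop punctuation/whitespace so trivial formatting differences still match.
    return re.sub(r"[^a-z0-9]", "", title.lower())


def deduplicate(records: list[Record]) -> tuple[list[Record], int]:
    """Keep the first occurrence of each study; return (unique records, number removed)."""
    seen_dois: set[str] = set()
    seen_titles: set[tuple[str, int]] = set()
    unique: list[Record] = []
    for rec in records:
        doi_key = rec.doi.strip().lower() if rec.doi else None
        title_key = (normalise_title(rec.title), rec.year)
        if (doi_key and doi_key in seen_dois) or title_key in seen_titles:
            continue  # duplicate of a record already kept
        if doi_key:
            seen_dois.add(doi_key)
        seen_titles.add(title_key)
        unique.append(rec)
    return unique, len(records) - len(unique)


# Hypothetical records exported from three databases.
records = [
    Record("MEDLINE", "CBT for chronic low back pain: a randomised trial", 2020, "10.1000/xyz123"),
    Record("Embase", "CBT for Chronic Low Back Pain - A Randomised Trial", 2020, "10.1000/XYZ123"),
    Record("CENTRAL", "CBT for chronic low back pain: a randomised trial.", 2020),
    Record("CENTRAL", "Exercise therapy for low back pain", 2019),
]
unique, removed = deduplicate(records)
print(len(unique), removed)  # 2 2
```

The number removed is the "duplicates removed" figure that PRISMA 2020 asks for, so it is worth keeping the function's output rather than recounting by hand.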

Screening: titles, abstracts, and full texts

Screening is the most time-intensive phase of a systematic review, accounting for roughly 40 to 60 per cent of total researcher hours. It happens in two stages: a title-and-abstract pass (broad, fast) followed by a full-text pass on the survivors (slower, judgement-heavy). Cochrane and the Joanna Briggs Institute both recommend dual independent screening with arbitration by a third reviewer, on the basis that single-screen workflows miss roughly 5 to 13 per cent of eligible studies.

AI-assisted screening aims to preserve the dual-reviewer redundancy while cutting human time by an order of magnitude: the model handles the obvious excludes, and humans handle the borderline calls and the disagreements. A validation study across 14 reviews, published in Systematicly Journal, reported 97.3 per cent sensitivity for AI-assisted abstract screening, which the authors describe as competitive with human dual screening[5].
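
Sensitivity here means recall against a human reference standard: of the records the human reviewers judged eligible, what fraction did the AI flag for inclusion? The Python sketch below shows that arithmetic, together with specificity and the share of records the AI would remove from the human queue (one rough proxy for work saved). The decision lists are made-up illustration data, not results from any study.

```python
def screening_metrics(ai_include: list[bool], human_include: list[bool]) -> dict[str, float]:
    """Compare AI include/exclude decisions with a human reference standard, record by record."""
    if len(ai_include) != len(human_include):
        raise ValueError("decision lists must be the same length")
    pairs = list(zip(ai_include, human_include))
    tp = sum(1 for ai, hu in pairs if ai and hu)          # eligible, flagged by AI
    fn = sum(1 for ai, hu in pairs if not ai and hu)      # eligible, missed by AI
    tn = sum(1 for ai, hu in pairs if not ai and not hu)  # ineligible, excluded by AI
    fp = sum(1 for ai, hu in pairs if ai and not hu)      # ineligible, flagged by AI
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("reference standard needs both eligible and ineligible records")
    return {
        "sensitivity": tp / (tp + fn),              # share of eligible records the AI kept
        "specificity": tn / (tn + fp),              # share of ineligible records the AI excluded
        "excluded_share": (tn + fn) / len(pairs),   # records the AI would drop from the human queue
    }


# Illustrative data: 10 records, 4 judged eligible by the human reviewers.
human = [True, True, True, True, False, False, False, False, False, False]
ai = [True, True, True, False, True, False, False, False, False, False]
print(screening_metrics(ai, human))
```

A missed eligible study (a false negative) is the costly error in screening, which is why validation work leads with sensitivity rather than overall accuracy.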

Whichever method you choose, the inclusion criteria must be operationalised before screening starts. Pilot the criteria on 50 to 100 records, calculate inter-rater agreement (Cohen's kappa, target ≥ 0.6), and refine ambiguous criteria before moving to the full set. Record every excluded full-text study with a reason; the PRISMA 2020 flow diagram requires it.
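
Cohen's kappa corrects raw agreement for the agreement two reviewers would reach by chance: κ = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e is the chance agreement computed from each reviewer's marginal include/exclude rates. A minimal Python sketch for a pilot set follows; the 20 pilot decisions are invented for illustration.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two raters assigning categorical labels to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty rating lists")
    n = len(rater_a)
    # Observed agreement: share of items both raters labelled identically.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: for each label, the product of the two raters' marginal proportions.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(rater_a) | set(rater_b)
    p_e = sum((counts_a[lab] / n) * (counts_b[lab] / n) for lab in labels)
    if p_e == 1:
        raise ValueError("kappa is undefined when both raters use one identical label throughout")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical pilot: the reviewers disagree on 2 of 20 records.
reviewer_1 = ["include"] * 6 + ["exclude"] * 14
reviewer_2 = ["include"] * 5 + ["exclude", "include"] + ["exclude"] * 13
print(round(cohens_kappa(reviewer_1, reviewer_2), 2))  # 0.76
```

Here raw agreement is 90 per cent, but because both reviewers exclude most records, chance agreement is already 58 per cent, so kappa lands at about 0.76: above the 0.6 target, though well short of the raw figure.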

Data extraction

Data extraction converts the included studies into a structured table the synthesis can act on. The extraction form is built from the protocol's data items and should be piloted on 5 to 10 studies before the full extraction begins. Cochrane recommends dual independent extraction for outcome data, with single extraction acceptable for study characteristics where the risk of error is lower.

The extraction form typically captures: study identifiers (author, year, country, setting), population (sample size, demographics, inclusion criteria), intervention (dose, duration, comparator), outcomes (definition, measurement instrument, follow-up points), effect estimates (means, standard deviations, event counts, risk ratios with confidence intervals), and any information needed for the risk-of-bias assessment.
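
One way to make the form enforceable is to encode it as a typed record, so both extractors fill in exactly the same fields and their disagreements can be listed automatically for arbitration. The Python sketch below is deliberately cut down: the field names and the study "Smith 2020" are hypothetical, and a real form would cover every data item in the protocol.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ExtractionRecord:
    study_id: str                      # e.g. first author + year
    country: str
    n_randomised: int
    intervention: str
    comparator: str
    outcome: str                       # outcome definition as reported
    follow_up_weeks: Optional[int]
    mean_int: Optional[float] = None   # intervention-arm mean
    sd_int: Optional[float] = None
    mean_ctl: Optional[float] = None   # comparator-arm mean
    sd_ctl: Optional[float] = None


def discrepancies(first: ExtractionRecord, second: ExtractionRecord) -> dict[str, tuple]:
    """Return every field on which two independent extractors disagree."""
    if first.study_id != second.study_id:
        raise ValueError("records describe different studies")
    return {
        f.name: (getattr(first, f.name), getattr(second, f.name))
        for f in fields(ExtractionRecord)
        if getattr(first, f.name) != getattr(second, f.name)
    }


ext_a = ExtractionRecord("Smith 2020", "UK", 120, "CBT", "usual care", "pain (0-10 NRS)", 12, 3.1, 1.8, 4.2, 1.9)
ext_b = ExtractionRecord("Smith 2020", "UK", 120, "CBT", "usual care", "pain (0-10 NRS)", 12, 3.1, 1.8, 4.2, 2.9)
print(discrepancies(ext_a, ext_b))  # {'sd_ctl': (1.9, 2.9)}
```

Only the flagged fields go to the arbiter, which keeps dual extraction of outcome data cheap while still catching transcription errors such as the mistyped SD above.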

- Numeric outcomes need the point estimate, the dispersion (SD, SE, IQR), and the sample size at every measured time point.

- Dichotomous outcomes need event counts in each arm. Where only proportions are reported, calculate the count yourself or contact the authors.

- Time-to-event outcomes need the hazard ratio with confidence interval. Reconstruct from Kaplan-Meier curves only as a last resort, and document the method.

- Adverse events need a defined denominator and a defined event list; ad-hoc lists are not extractable.
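
Several of these items can be derived when a paper reports a different statistic. The Python helpers below sketch three standard conversions described in the Cochrane Handbook: the SD of a single group mean from its standard error (SD = SE × √n), an event count from a reported percentage and arm size, and the standard error of a log hazard ratio from its 95% confidence interval (SE = (ln upper − ln lower) / 3.92). They assume large-sample normal approximations; small trials need a t-distribution value in place of 1.96, and a rounded percentage can make a back-calculated count ambiguous, which is worth confirming with the authors.

```python
import math


def sd_from_se(se: float, n: int) -> float:
    """SD of a single group's mean from its standard error: SD = SE * sqrt(n)."""
    return se * math.sqrt(n)


def events_from_percentage(percentage: float, n: int) -> int:
    """Back-calculate an event count from a reported percentage; check it against the paper."""
    return round(percentage / 100 * n)


def log_hr_and_se(hr: float, lower: float, upper: float, z: float = 1.96) -> tuple[float, float]:
    """Log hazard ratio and its SE from a reported HR and 95% CI (normal approximation)."""
    log_hr = math.log(hr)
    se = (math.log(upper) - math.log(lower)) / (2 * z)
    return log_hr, se


print(sd_from_se(0.5, 100))              # 5.0
print(events_from_percentage(23.3, 60))  # 14
print(log_hr_and_se(0.75, 0.60, 0.94))
```

Record which conversion was applied to which study in the extraction form, so the synthesis can be checked and a sensitivity analysis can exclude derived values if needed.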

Risk of bias and quality assessment

Risk-of-bias assessment is the structured judgement of how much a study's design, conduct, and reporting could have distorted its findings. The judgement is made per-outcome, not per-study, because a trial may be at low risk for the primary outcome and high risk for an adverse-event outcome reported only at follow-up.

- RoB 2.0[6] is the current Cochrane tool for randomised trials. It assesses five domains (randomisation, deviations from intended interventions, missing outcome data, measurement of the outcome, selection of the reported result) with signalling questions and an overall judgement of low, some concerns, or high risk.

- ROBINS-I[7] is the Cochrane tool for non-randomised studies of interventions. Seven domains, including confounding and selection of participants. Demands more judgement than RoB 2.0.

- QUADAS-2 is the tool of choice for diagnostic accuracy studies.

- Newcastle-Ottawa Scale is widely used for cohort and case-control studies, though increasingly replaced by ROBINS-I where the resources allow.

Whichever tool you use, dual independent assessment with arbitration is the standard. Record both reviewers' judgements and how each disagreement was resolved in the supporting documentation; PRISMA 2020 asks for the risk-of-bias assessment of each included study to be presented in the results, not in the flow diagram.
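
RoB 2 derives the overall judgement for a result from its five domain judgements: low only if every domain is low, high if any domain is high, and some concerns otherwise, with the option of judging the result high when several domains raise concerns that together substantially lower confidence. The Python sketch below encodes that mapping and stores it per outcome, since the same trial can warrant different judgements for different outcomes. The `escalate` flag stands in for the reviewers' judgement call and is an assumption of this sketch, not part of the official tool; the code records judgements and does not replace the signalling questions.

```python
from enum import Enum


class Judgement(Enum):
    LOW = "low"
    SOME_CONCERNS = "some concerns"
    HIGH = "high"


DOMAINS = (
    "randomisation process",
    "deviations from intended interventions",
    "missing outcome data",
    "measurement of the outcome",
    "selection of the reported result",
)


def overall_judgement(domains: dict[str, Judgement], escalate: bool = False) -> Judgement:
    """Map the five RoB 2 domain judgements for one result to an overall judgement."""
    if set(domains) != set(DOMAINS):
        raise ValueError("judge all five RoB 2 domains")
    values = list(domains.values())
    if Judgement.HIGH in values:
        return Judgement.HIGH
    if all(v is Judgement.LOW for v in values):
        return Judgement.LOW
    # Reviewers may escalate when multiple 'some concerns' domains jointly undermine the result.
    if escalate and values.count(Judgement.SOME_CONCERNS) > 1:
        return Judgement.HIGH
    return Judgement.SOME_CONCERNS


# Hypothetical trial: low risk for the primary outcome, high for adverse events with missing data.
pain = {d: Judgement.LOW for d in DOMAINS}
adverse = {**pain, "missing outcome data": Judgement.HIGH}
trial = {"pain at 12 weeks": overall_judgement(pain), "adverse events": overall_judgement(adverse)}
print({outcome: j.value for outcome, j in trial.items()})
# {'pain at 12 weeks': 'low', 'adverse events': 'high'}
```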

Synthesis and (when appropriate) meta-analysis

Synthesis is where the included studies are combined into an answer. Two routes: quantitative synthesis (meta-analysis) when the included studies are clinically and methodologically comparable, or structured narrative synthesis (the SWiM framework) when they are not. The choice should be pre-specified in the protocol, with a fallback in case heterogeneity prevents pooling.

Meta-analysis pools effect estimates across studies to produce a weighted summary with greater precision than any single trial. Its validity rests on the inputs being comparable; the statistical machinery does not repair clinical heterogeneity. See the dedicated meta-analysis guide for effect-size measures, fixed-effect versus random-effects models, forest-plot interpretation, heterogeneity statistics, subgroup analysis, and publication-bias diagnostics.
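
For orientation only, here is a compact Python sketch of inverse-variance pooling: a fixed-effect estimate, Cochran's Q, I², and a DerSimonian-Laird random-effects estimate with a normal-approximation 95% interval. It assumes each study supplies an effect on an additive scale (for example a mean difference or a log risk ratio) and its standard error; the input numbers are invented. DerSimonian-Laird is only one of several estimators of the between-study variance τ², and the Cochrane Handbook discusses alternatives.

```python
import math


def pool(effects: list[float], ses: list[float]) -> dict[str, float]:
    """Inverse-variance fixed-effect and DerSimonian-Laird random-effects pooling."""
    k = len(effects)
    if k < 2 or k != len(ses):
        raise ValueError("need at least two studies with matching standard errors")
    w = [1 / se**2 for se in ses]                                   # fixed-effect weights
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))  # Cochran's Q
    df = k - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0            # % variability beyond chance
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c)                                   # DL between-study variance
    w_re = [1 / (se**2 + tau2) for se in ses]                       # random-effects weights
    random_effect = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se_random = math.sqrt(1 / sum(w_re))
    return {
        "fixed": fixed,
        "random": random_effect,
        "ci_low": random_effect - 1.96 * se_random,
        "ci_high": random_effect + 1.96 * se_random,
        "Q": q,
        "I2": i2,
        "tau2": tau2,
    }


# Invented mean differences (pain score) and standard errors from four trials.
result = pool([-1.2, -0.8, -1.5, -0.3], [0.4, 0.3, 0.5, 0.35])
print({name: round(value, 3) for name, value in result.items()})
```

When τ² is zero the two models coincide; as τ² grows, the random-effects weights flatten towards equality and the interval widens. None of that arithmetic makes clinically dissimilar studies comparable.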

Structured narrative synthesis under the SWiM framework is the honest answer when pooling is inappropriate. It requires explicit grouping, comparison, and interpretation rules, not free-form prose. The result is still a publishable review; it just answers the question with a structured summary rather than a pooled estimate.
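
SWiM lists vote counting based on the direction of effect as one acceptable method when effect estimates cannot be pooled; it is distinct from counting "statistically significant" results, which is not recommended. The Python sketch below counts studies whose estimate favours the intervention and applies a two-sided exact sign test. The direction data are illustrative, studies with no discernible direction are simply set aside, and in practice the median and range of the available estimates would be reported alongside.

```python
from math import comb


def sign_test(favour: int, against: int) -> float:
    """Two-sided exact binomial (sign) test against a 50:50 split; ties are excluded beforehand."""
    n = favour + against
    if n == 0:
        raise ValueError("no studies with a discernible direction of effect")
    k = min(favour, against)
    # Probability of a split at least as extreme as observed, doubled for two sides, capped at 1.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2**n
    return min(1.0, 2 * tail)


# Illustrative directions: +1 favours the intervention, -1 the comparator, 0 no clear direction.
directions = [+1, +1, +1, -1, +1, +1, 0, +1]
favour = sum(d > 0 for d in directions)
against = sum(d < 0 for d in directions)
print(favour, against, round(sign_test(favour, against), 3))  # 6 1 0.125
```

Six of seven studies pointing the same way still gives p = 0.125, which illustrates how little a direction-only synthesis can say with few studies; the grouping and direction rules should be pre-specified just like a meta-analysis.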

PRISMA 2020 reporting

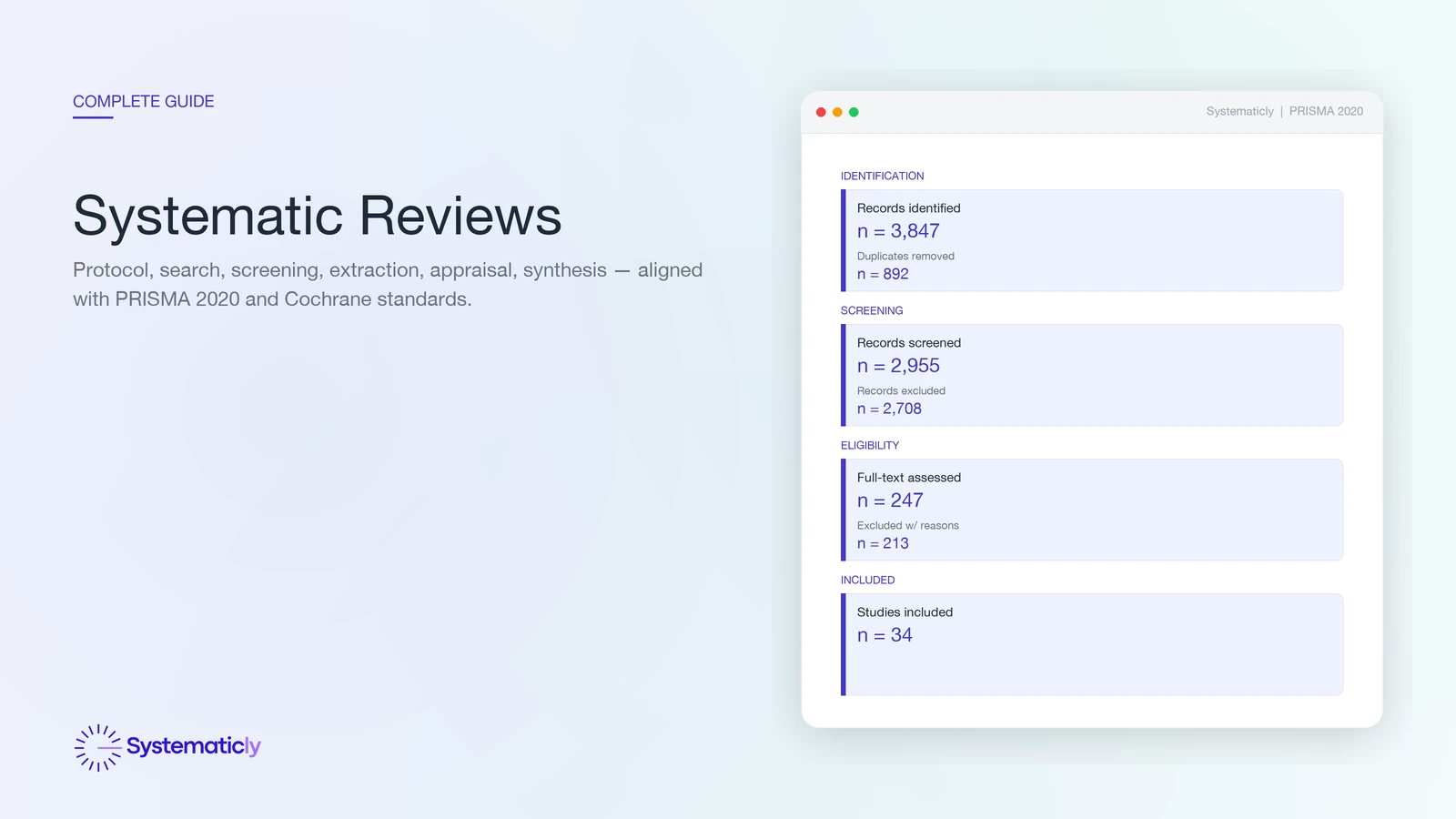

PRISMA 2020 is the current reporting standard for systematic reviews and meta-analyses[2]. The checklist has 27 items across the title, abstract, introduction, methods, results, discussion, and other-information sections. The flow diagram tracks records through identification, screening, and inclusion, with counts at every transition and reasons for every full-text exclusion.

Compared with PRISMA 2009, PRISMA 2020 added items on the use of automation tools, the certainty-of-evidence assessment (typically GRADE), and the availability of data, code, and other materials, and it expanded the reporting of protocol registration and amendments. The flow diagram now comes in templates for new reviews and for updated reviews (the latter tracks studies from the previous review separately from the new search), each with an optional pathway for records found through other methods such as citation searching. PRISMA-S covers search-strategy reporting in detail; PRISMA-NMA covers network meta-analyses.

Most journals require PRISMA 2020 compliance, and many require the completed checklist as a supplementary file. Generate the diagram from the actual screening data rather than redrawing it by hand at the end of the review; hand-drawn diagrams are the most common source of inconsistencies between the diagram and the prose.
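
Generating the numbers from the screening data is straightforward if every record in the log carries its final status. The Python sketch below derives the main PRISMA 2020 flow-diagram counts (identified, duplicates removed, screened, excluded at title and abstract, full texts assessed, full-text exclusions by reason, included) from such a log. The log format, field names, and exclusion reasons are hypothetical, and for brevity it ignores the "reports not retrieved" box and the other-methods pathway.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class LogEntry:
    record_id: str
    duplicate: bool = False
    title_abstract_include: Optional[bool] = None  # None if never screened (e.g. a duplicate)
    full_text_reason: Optional[str] = None          # full-text exclusion reason; None if included


def prisma_counts(log: list[LogEntry]) -> dict:
    """Derive flow-diagram counts directly from the screening log."""
    screened = [e for e in log if not e.duplicate]
    # Records not explicitly included at title/abstract are counted as excluded there.
    full_text = [e for e in screened if e.title_abstract_include]
    reasons = Counter(e.full_text_reason for e in full_text if e.full_text_reason)
    return {
        "identified": len(log),
        "duplicates_removed": sum(e.duplicate for e in log),
        "screened": len(screened),
        "excluded_title_abstract": len(screened) - len(full_text),
        "full_text_assessed": len(full_text),
        "full_text_excluded": dict(reasons),
        "included": len(full_text) - sum(reasons.values()),
    }


log = [
    LogEntry("r1", title_abstract_include=True),
    LogEntry("r2", title_abstract_include=True, full_text_reason="wrong comparator"),
    LogEntry("r3", title_abstract_include=False),
    LogEntry("r4", duplicate=True),
    LogEntry("r5", title_abstract_include=True, full_text_reason="wrong population"),
    LogEntry("r6", title_abstract_include=False),
]
print(prisma_counts(log))
```

Because the counts and the review's results come from the same log, the diagram cannot silently drift from the prose when late changes are made.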

Frequently asked questions

How long does a systematic review actually take?

A traditional systematic review typically takes 12 to 18 months from protocol registration to publication, with screening and data extraction consuming the bulk of researcher time. AI-assisted workflows can compress that to roughly 3 to 4 months by automating the search, deduplication, and first-pass screening, leaving researchers to focus on judgement-heavy work such as eligibility decisions, risk-of-bias assessment, and interpretation.

What is the difference between a systematic review and a literature review?

A systematic review follows a pre-specified, registered protocol with explicit inclusion criteria, a comprehensive search across multiple databases, dual screening, structured data extraction, and a risk-of-bias appraisal. A narrative literature review has no required structure and selects sources at the author's discretion, so it cannot rule out cherry-picking. Both are useful, but only the systematic review supports evidence-based recommendations.

Do I always need a meta-analysis in my systematic review?

No. A meta-analysis is appropriate only when the included studies are clinically and methodologically comparable enough to pool. Where populations, interventions, comparators, or outcomes differ substantially, a structured narrative synthesis reported according to the SWiM guideline is the honest answer. See the dedicated guide on systematic review versus meta-analysis for when each is appropriate.

How many databases should I search?

Cochrane recommends a minimum of three databases for most clinical questions, typically MEDLINE/PubMed, Embase, and the Cochrane Central Register of Controlled Trials (CENTRAL). Add CINAHL for nursing and allied-health questions, PsycINFO for behavioural-science questions, and a regional or trial-registry source where relevant. Single-database searches systematically miss eligible studies and should be avoided.

Should every record be dual-screened?

Cochrane and the Joanna Briggs Institute both recommend dual independent screening at both the title-and-abstract and full-text stages, with a third reviewer arbitrating disagreements. Single-screen workflows have been shown to miss roughly 5 to 13 per cent of eligible studies. AI-assisted dual screening keeps the redundancy while reducing the human hours per record.

What changed between PRISMA 2009 and PRISMA 2020?

PRISMA 2020 keeps a 27-item checklist but splits several items into sub-items, and it revised the flow diagram to reflect modern review practice, including separate pathways for records from databases and registers, records found through other methods such as citation searching, and updates of existing reviews. New or expanded items cover protocol registration and amendments, automation tools used, the certainty-of-evidence framework applied, and the availability of data, code, and other materials.

Can I update an existing systematic review?

Yes. Updating an existing review is often more efficient than starting fresh. The PRISMA 2020 flow-diagram template for updated reviews and Cochrane's guidance on updating and living systematic reviews support re-running the search, screening only new records, and reporting how the conclusions have changed. AI screening is particularly well-suited to updates because the include and exclude decisions from the original review can serve as training data for the model.

If you are scoping or running a systematic review, Systematicly can compress the mechanical work without compromising the methodology. The platform handles the search, deduplication, screening, extraction, appraisal, and PRISMA reporting; you stay in control of every judgement. Start a free project at research.systematicly.com to try it on your protocol.

Summary

A systematic review is a registered, reproducible synthesis of all relevant primary studies, executed in seven phases (question, protocol, search, screening, extraction, appraisal, synthesis) and reported against PRISMA 2020. Each phase has a documented standard; the discipline is what makes the review trustworthy. Systematicly automates the mechanical work in every phase so the human hours go to the judgement calls.

References

- [1] Higgins JPT, Thomas J, Chandler J, Cumpston M, Li T, Page MJ, Welch VA (editors). Cochrane Handbook for Systematic Reviews of Interventions. Version 6.4. Cochrane; 2023.

- [2] Page MJ, McKenzie JE, Bossuyt PM, et al. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ. 2021;372:n71.

- [3] Borah R, Brown AW, Capers PL, Kaiser KA. Analysis of the time and workers needed to conduct systematic reviews of medical interventions using data from the PROSPERO registry. BMJ Open. 2017;7(2):e012545.

- [4] McGowan J, Sampson M, Salzwedel DM, Cogo E, Foerster V, Lefebvre C. PRESS Peer Review of Electronic Search Strategies: 2015 Guideline Statement. Journal of Clinical Epidemiology. 2016;75:40-46.

- [5] Bishop M, Chen S. AI-Assisted Abstract Screening Achieves 97.3% Sensitivity Across 14 Systematic Reviews: A Validation Study. Systematicly Journal. 2026.

- [6] Sterne JAC, Savović J, Page MJ, et al. RoB 2: a revised tool for assessing risk of bias in randomised trials. BMJ. 2019;366:l4898.

- [7] Sterne JA, Hernán MA, Reeves BC, et al. ROBINS-I: a tool for assessing risk of bias in non-randomised studies of interventions. BMJ. 2016;355:i4919.